- Zero Trust

- AI Security

- Security Architecture

Jan 08, 2026

The Great Decoupling: From Writing Code to Designing the Loop

Something is shifting in how we build and protect systems. The center of gravity is moving - from writing logic to managing systems that generate it. What hit development is about to hit security. Are you ready?

Nikola Novoselec

Founder & Zero Trust Architect

AI-generated code doesn’t mean “hands-off.” The focus has shifted from the syntax of the line to the sanity of the system.

1. The Developer as “Forensic Administrator”

Let me start with what I’m seeing in development. If an AI can scaffold a memory-safe Rust parser in seconds, the “act of writing” is a commodity.

Context Management is the new Memory Management. For complex tasks spanning days or weeks, you must preserve long-term memory - not just curate truth sources, but ensure the agent doesn’t forget what it’s doing, how, or why. You are the Memory Management Unit. If you let the context window fill with stale information, the AI loses coherence. If you fail to persist critical decisions across sessions, you’re starting from scratch every time.
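One way to picture the "Memory Management Unit" role is a small context store that pins critical decisions and lets stale material fall out of the window first. This is a minimal sketch in plain TypeScript, not any agent framework's API: `ContextManager`, the token budget, and the recency-based eviction rule are all assumptions made for illustration.

```typescript
// Hypothetical sketch: ContextManager and its fields are illustrative names.
type ContextItem = {
  id: string;
  text: string;
  tokens: number;     // rough token cost of this item
  pinned: boolean;    // true for decisions that must survive across sessions
  lastUsedAt: number; // logical clock value when the item was last relevant
};

class ContextManager {
  private items = new Map<string, ContextItem>();
  private clock = 0;
  private budgetTokens: number;

  constructor(budgetTokens: number) {
    this.budgetTokens = budgetTokens;
  }

  add(id: string, text: string, tokens: number, pinned: boolean = false): void {
    this.clock += 1;
    this.items.set(id, { id, text, tokens, pinned, lastUsedAt: this.clock });
  }

  // Mark an item as still relevant so it is not treated as stale.
  touch(id: string): void {
    const item = this.items.get(id);
    if (item !== undefined) {
      this.clock += 1;
      item.lastUsedAt = this.clock;
    }
  }

  // Pinned decisions are always kept; the remaining budget goes to the most
  // recently used items, so stale entries drop out of the window first.
  buildWindow(): ContextItem[] {
    const all = Array.from(this.items.values());
    const selected = all.filter((i) => i.pinned);
    let used = selected.reduce((sum, i) => sum + i.tokens, 0);
    const rest = all
      .filter((i) => !i.pinned)
      .sort((a, b) => b.lastUsedAt - a.lastUsedAt);
    for (const item of rest) {
      if (used + item.tokens <= this.budgetTokens) {
        selected.push(item);
        used += item.tokens;
      }
    }
    return selected;
  }

  // Serialize pinned decisions so the next session can reload them.
  persistDecisions(): string {
    const pinned = Array.from(this.items.values()).filter((i) => i.pinned);
    return JSON.stringify(pinned);
  }
}
```

The design choice worth noticing is the split between pinned and unpinned items: decisions are never evicted, while everything else competes for the remaining budget by recency.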

Engineering Mastery. Reading code isn’t enough. You need to understand the architecture behind the system - environmental constraints, technical debt, integration points, failure modes. You have to stay hands-on just to keep up with the velocity of the creation process unfolding before your eyes.

2. The Pivot: When The New Developer Reality Hits SecOps

Here’s where it gets uncomfortable. Everything that feels empowering in AI-driven development becomes dangerous when applied to security operations. Developers have time to curate context and review diffs. Security operators facing a ransomware event do not.

The reality that transformed how we build is now hitting how we protect - and the stakes are far higher.

3. The Autonomy Paradox

This is the core tension we need to resolve. In a high-velocity incident, human review is a “latency bottleneck.” Wait 10 minutes to approve a remediation? The data is already gone.

You need autonomy, but you cannot grant it unsupervised. You must govern agents in real-time - providing Human-in-the-Loop (HITL) oversight that can pause, redirect, or approve without killing response speed.

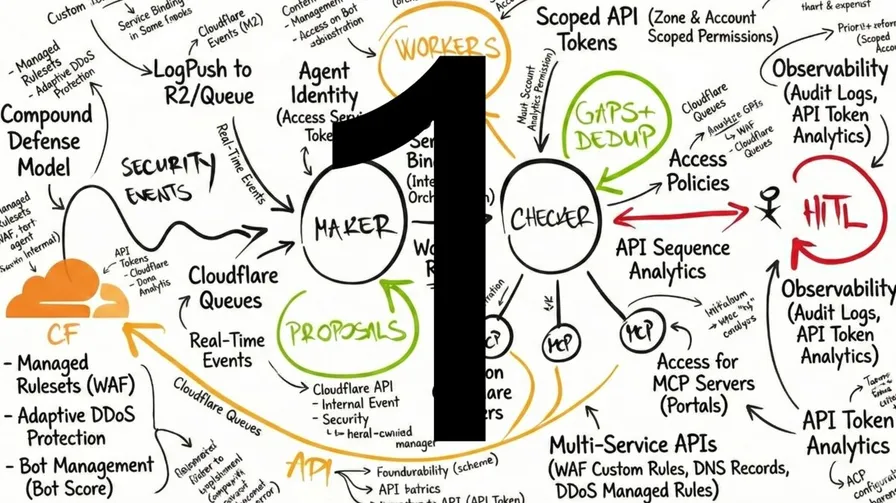

4. The “Maker-Checker” Architecture

So what’s the answer? The solution is a Dual-Agent architecture - the “Maker-Checker” pattern borrowed from banking compliance and dual-control governance:

The Remediation Agent (Maker): Fast and aggressive. It parses logs, correlates events, and proposes remediation - but it executes only after Checker approval.

The Reviewer Agent (Checker): A specialized model running in an isolated context - logically separated from the raw, untrusted data the Maker ingests. Because it sits outside the execution loop, it has significantly reduced exposure to the same attack vectors. It doesn’t generate fixes - it checks intent against your policy fabric.

This is where Zero Trust becomes the great equalizer. Not because it’s another security layer, but because it fundamentally resets how we think about security architecture - shifting from managing exceptions to legacy rules to managing multidimensional policies. The Reviewer enforces this policy fabric. It auto-approves low-risk tasks and escalates to humans, with a clear Decision Memo, only when it detects a policy conflict.
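The Checker's job can be sketched as a pure function over the Maker's proposed action and a policy table. Everything named here is hypothetical: the `PolicyRule` shape, the risk levels, and the action kinds are assumptions for illustration, and a real policy fabric would evaluate far more dimensions. Note that the Checker only sees the structured proposal, not the raw data the Maker ingested.

```typescript
// Hypothetical sketch: types, rule shape, and action names are illustrative.
type RiskLevel = "low" | "medium" | "high";

interface ProposedAction {
  kind: string;          // e.g. "block_ip" or "isolate_host"
  target: string;
  justification: string; // the Maker's stated intent, not the raw log data
}

interface PolicyRule {
  kind: string;
  risk: RiskLevel;
  allowedTargets: RegExp; // scope in which the action is permitted
}

interface DecisionMemo {
  action: ProposedAction;
  reason: string; // why the Checker could not auto-approve
}

type Verdict =
  | { decision: "auto_approve"; action: ProposedAction }
  | { decision: "escalate"; memo: DecisionMemo };

function review(action: ProposedAction, policy: PolicyRule[]): Verdict {
  const escalate = (reason: string): Verdict => ({
    decision: "escalate",
    memo: { action, reason },
  });

  // Default to escalation: anything the policy does not cover goes to a human.
  const rule = policy.find((r) => r.kind === action.kind);
  if (rule === undefined) {
    return escalate("no policy covers this action kind");
  }
  if (!rule.allowedTargets.test(action.target)) {
    return escalate("target is outside policy scope");
  }
  if (rule.risk !== "low") {
    return escalate(`risk level "${rule.risk}" requires human approval`);
  }
  return { decision: "auto_approve", action };
}
```

In this sketch, auto-approval is the narrow path and escalation is the default, so a gap in the policy table produces a Decision Memo rather than a silent approval.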

This is defense-in-depth, re-architected for the AI age.

Baselines over rules. Evidence, not hope.

5. Grounding It in Reality

Now let’s ground the architecture in reality. Where do you deploy agents that govern security policy and execute auto-remediation?

For latency-sensitive enforcement, the optimal deployment is where security happens and decisions are executed - “security needs to happen where the music is playing.” This means access to context through platform bindings, not external network calls that add latency and expand attack surface. We want execution as close to real-time as possible. This is why a modern Zero Trust platform must offer both compute and AI inference capabilities. Otherwise you’re either blind or slow - or both.

This isn’t a vendor preference - it’s an architectural constraint. For a critical infrastructure provider, this means remediation agents controlling edge security (north-south traffic) run on Cloudflare, utilizing native capabilities that let them exchange data through platform bindings - not APIs that can be compromised - while enforcing guardrails in real-time. Same principle for internal security governing east-west traffic: those agents need to run close to or on the microsegmentation platform that controls it, for observability and latency reasons.

While this is based on a specific technological reality, the architectural principle applies regardless of vendor.

Here’s what that looks like in practice:

AI Security Layer:

- AI Gateway Guardrails: Content moderation for both incoming prompts and outgoing responses

- MCP Server Portals: MCP servers provide the tooling that enables agents to orchestrate policy via API calls or IaC. These tools need the same identity-aware policy as everything else - only authenticated agents can access them and execute remediation. The same policy engine that governs access for users, devices, systems, and APIs also governs access to MCP servers and their tools.
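The identity-aware gate in front of MCP tools can be sketched as a default-deny check. This is plain TypeScript rather than any MCP or Cloudflare API: `AgentIdentity`, `ToolPolicy`, the role names, and the tool names are assumptions for illustration only.

```typescript
// Hypothetical sketch: identity and policy shapes are illustrative.
interface AgentIdentity {
  agentId: string;
  authenticated: boolean; // set by the identity layer, never by the agent itself
  roles: string[];
}

interface ToolPolicy {
  tool: string;
  requiredRole: string;
}

// Default deny: unauthenticated callers, unknown tools, and missing roles
// are all rejected.
function canInvoke(
  identity: AgentIdentity,
  tool: string,
  policies: ToolPolicy[],
): boolean {
  if (!identity.authenticated) {
    return false;
  }
  const policy = policies.find((p) => p.tool === tool);
  if (policy === undefined) {
    return false;
  }
  return identity.roles.indexOf(policy.requiredRole) !== -1;
}
```

A remediation agent holding a hypothetical `remediator` role could call a tool whose policy requires that role, while the same agent without a verified identity would be refused regardless of the roles it claims.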

Compute and Orchestration:

- Agents SDK: The `needsApproval` callback pauses execution for Checker sign-off. If the Checker auto-approves the action, the HITL escalation never triggers. If not, the Checker agent triggers a HITL escalation workflow. Yes, you read that right - agents escalating to agents, then to humans. The first escalation is Agent-in-the-Loop, or AITL (Maker to Checker); the second is HITL (Checker to human). This works because the approval mechanism is purely programmatic - any authenticated client can deliver approval, whether human or agent. The Checker simply connects via direct agent-to-agent calls (RPC), evaluates against your policy fabric, and responds.

- Durable Objects: Cloudflare’s stateful compute primitive - each agent gets its own isolated, persistent memory that survives restarts. Agents can hibernate for hours or days while waiting for approval, then resume exactly where they left off.

- Workers AI: Run separate models (Llama 8B for speed, 70B for reasoning) in isolated contexts
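The two-stage escalation described in this list (Maker to Checker, then Checker to human) can be modeled as a small state machine. The sketch below is plain TypeScript and deliberately does not use the Agents SDK or Durable Objects APIs; `ApprovalGate`, its states, and the approver shape are hypothetical names chosen for illustration.

```typescript
// Hypothetical sketch: a model of the approval flow, not the Agents SDK API.
type Approver = { id: string; kind: "agent" | "human"; authenticated: boolean };
type GateState = "pending_checker" | "pending_human" | "approved" | "rejected";

class ApprovalGate {
  state: GateState = "pending_checker";
  trail: string[] = []; // audit trail of who decided what

  // Stage 1 (AITL): the Checker approves, rejects, or escalates to a human.
  checkerDecides(by: Approver, verdict: "approve" | "reject" | "escalate"): void {
    this.requireStage(by, "pending_checker", "agent");
    if (verdict === "approve") {
      this.state = "approved";
    } else if (verdict === "reject") {
      this.state = "rejected";
    } else {
      this.state = "pending_human";
    }
    this.trail.push(`${by.id}:${verdict}`);
  }

  // Stage 2 (HITL): reached only if the Checker escalated.
  humanDecides(by: Approver, verdict: "approve" | "reject"): void {
    this.requireStage(by, "pending_human", "human");
    this.state = verdict === "approve" ? "approved" : "rejected";
    this.trail.push(`${by.id}:${verdict}`);
  }

  // The Maker may execute only once the gate is approved.
  canExecute(): boolean {
    return this.state === "approved";
  }

  private requireStage(by: Approver, expected: GateState, kind: Approver["kind"]): void {
    if (!by.authenticated) {
      throw new Error("unauthenticated approver");
    }
    if (by.kind !== kind) {
      throw new Error(`expected a ${kind} approver`);
    }
    if (this.state !== expected) {
      throw new Error(`gate is ${this.state}, not ${expected}`);
    }
  }
}
```

If the Checker approves, the gate opens without ever reaching `pending_human`. In a stateful runtime, the gate's `state` and `trail` would be the data persisted while the agent waits for a decision.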

Observability:

- Logpush: Real-time streaming of every AI decision to your SIEM or data lake for forensic auditing

- Queues: Message queue with guaranteed (at-least-once) delivery - when an agent needs human attention, the escalation always gets through
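The idea that an escalation "always gets through" comes down to acknowledgement-based redelivery. The in-memory sketch below illustrates that semantic only, under the assumption of at-least-once delivery; it is not the Cloudflare Queues API, and `AtLeastOnceQueue` and `Escalation` are hypothetical names.

```typescript
// Hypothetical sketch: illustrates ack-based redelivery, not a real queue API.
interface Escalation {
  id: string;
  memo: string; // the Decision Memo text shown to the human
}

class AtLeastOnceQueue {
  private unacked = new Map<string, Escalation>();

  send(msg: Escalation): void {
    this.unacked.set(msg.id, msg);
  }

  // Offer every unacknowledged message to the handler. A message is removed
  // only when the handler returns true (ack); otherwise it stays queued and
  // is offered again on the next call.
  deliver(handler: (msg: Escalation) => boolean): number {
    let acked = 0;
    for (const msg of Array.from(this.unacked.values())) {
      if (handler(msg)) {
        this.unacked.delete(msg.id);
        acked += 1;
      }
    }
    return acked;
  }

  backlog(): number {
    return this.unacked.size;
  }
}
```

Because a message can be offered more than once, the consumer (for example, a paging integration) should treat the escalation `id` as an idempotency key.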

The Bottom Line

Here’s what it comes down to. The future of engineering isn’t about being “in the loop” - it’s about designing the loop.

We’ve moved from debugging syntax to debugging the translation of intent to action. The question is whether your policy fabric is strong enough to govern the agents you’re about to deploy.
